Tesla drivers using 'full self-driving' Autopilot pay less attention to their surroundings

US authorities criticise Tesla's roll-out of new tech

TESLA Motors is due to launch the latest version of its Autopilot “full self-driving” technology to owners this autumn, but US motoring standards authorities have expressed concerns and new research has revealed that the system could be making drivers inattentive to their surroundings.

A study by the Massachusetts Institute of Technology (MIT) used equipment to monitor head and eye movement of drivers, both with Autopilot engaged and when driving normally, and its data revealed that there were far more glances towards the cabin of the car than out of the windows when Autopilot was engaged. While it’s perhaps no surprise that Autopilot causes drivers to be less attentive, the study revealed the extent of the distraction that results.

Drivers tended to look down towards the car’s centre console, in keeping with the kind of glances made when looking at a smartphone or navigating the sub-menus in the car’s touchscreen. With Autopilot engaged, these glances lasted longer, with 22% of glances lasting for more than two seconds.

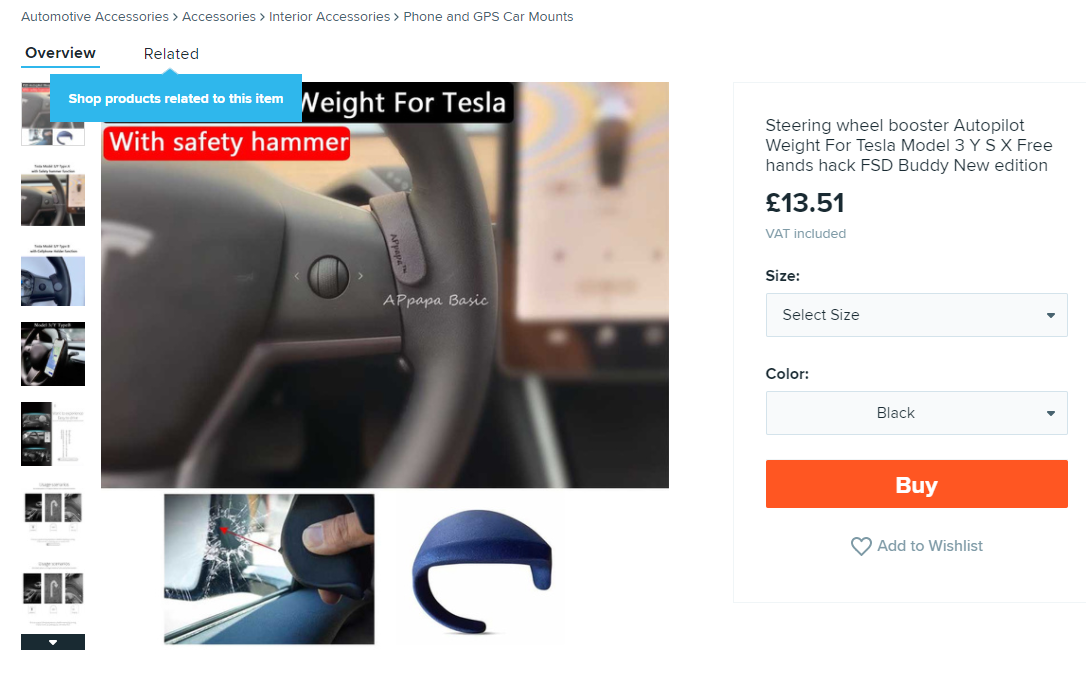

One issue with Tesla’s system is that, unlike some rivals’ driver assistance technology, Autopilot doesn’t come with a camera to monitor the driver’s head and eye movements. Instead it relies on a pressure sensor on the steering wheel to check that the driver is paying attention. It’s easy enough to grip the wheel while looking elsewhere, and MIT researchers believe that this could be an issue with the system.

Some drivers have been known to fit cheap weights to the steering wheel to trick the car into thinking a driver is paying attention; a few owners have been known to climb into the rear of the car with Autopilot engaged. MIT recommended adding an interior camera to help the vehicle’s software detect inattentiveness of the driver.

Safety body says Tesla should first address ‘basic safety issues’

Officials at the National Transportation Safety Board (NTSB), the US equivalent of the Department for Transport (DfT), are already concerned with Tesla’s approach to implementing its driver assistance systems. The organisation’s head, Jennifer Homendy, has stated that she’s unimpressed by Tesla essentially testing its tech upgrades on public streets, and that the company should address “basic safety issues” before implementing new software updates.

She also said Tesla’s use of the term “full self-driving” was “misleading and irresponsible”, adding that Tesla “has clearly misled numerous people to misuse and abuse technology.” However, unlike the DfT, the NTSB has no authority to compel car makers to make changes; it can only produce research and make recommendations.

These calls follow a series of high-profile accidents involving Teslas, where the Autopilot software has encountered an issue, but the driver has been unable to remedy the situation. Last month the US National Highway Traffic Safety Administration (NHTSA) launched an investigation into eleven serious incidents involving Tesla’s Autopilot system.

MIT’s research concluded that better driver management systems needed to be incorporated to help Autopilot — as well as rival self-driving technologies — recognise when a driver was being inattentive.

More education is also needed, so that drivers know that while Autopilot takes over the major driving functions, drivers must remain alert and in control at all times.

Tesla’s latest version of Autopilot is Version 10.0.1 but this has only been released to a small group of people so far. These ‘beta’ testers — drivers who will test the software for errors — will report back on issues with the set-up before the latest Autopilot is released to the broader public. Tesla intends to do this towards the end of September.

Tesla has always insisted that, despite some accidents, its data showed cars using Autopilot have fewer accidents per mile than cars being driven without its driver assistance technology.


- After reading how a study reveals that Tesla drivers using “Autopilot” pay less attention to their surroundings, you might be interested to hear that firefighters attending a Tesla blaze had to use “forty times more water”

- An electric car battery health check system wins innovation prize

- You may also be interested to know that the Lucid Air electric saloon has US-certified 520-mile range, overtaking Tesla